How I Evaluate and Stabilize Distributed Systems

A practical framework for understanding, diagnosing, and hardening distributed systems before they scale.

Opening

Most distributed systems don’t fail because of obvious architectural mistakes.

They fail at the boundaries:

- Retries

- State ownership

- Workflow recovery

These issues don’t show up in diagrams. They show up under load, during partial failure, and in the gaps between services.

The Lens

When evaluating a system, the goal is not to understand how it is described but how it behaves under real conditions.

Where I look first:

- Retry loops that amplify failure (or back-pressure)

- Unclear or duplicated state across services

- Tight coupling that makes small changes risky

- Workflows that don’t recover cleanly from partial failure

Most systems become brittle as they become increasingly unclear.

The first objective is to make the system legible:

- Clarify service boundaries

- Make state ownership explicit

- Understand how workflows behave under failure

Once a system is legible, it becomes possible to reason about it.

Once it is understandable, it can be stabilized.

Designing Complex Distributed Systems

One of the more complex systems I’ve architected was the event-driven architecture behind Salesforce Einstein Bots, which evolved into a large-scale multi-service workflow system processing billions of events per month — documented in Building a Fault-Tolerant Data Pipeline for Chatbots.

At its core, the system coordinated:

- Real-time user interactions

- Asynchronous processing pipelines

- External API integrations

- Long-running, stateful workflows

Kafka-backed pipelines were used for real-time aggregation and signal processing, providing visibility into system behavior as it evolved.

The challenge was not just throughput, it was maintaining correctness and recoverability across long-running, stateful interactions.

Two key tradeoffs shaped the system.

Consistency vs Availability

The system favored eventual consistency with strong idempotency guarantees:

- Explicit retry strategies

- Deduplication at service boundaries

- Clearly defined state transitions

This improved resilience under failure, but required discipline to avoid inconsistent outcomes.

Centralization vs Decomposition

Early designs centralized too much orchestration, creating bottlenecks and limiting flexibility.

The system evolved toward event-driven decomposition:

- Services owned their own state

- Behavior emerged through event flow rather than centralized coordination

This improved scalability and separation of concerns, but increased the need for:

- Well-defined contracts (Avro/Protobuf schemas, idempotency guarantees at service boundaries)

- Observability

- End-to-end traceability across services

In practice, the hardest part was not building the initial pipeline, but making the system observable and operable at scale. Once bottlenecks are visible, the system becomes tractable.

What to Keep vs What to Change

When evaluating an existing system, I group components into three categories:

| Category | Characteristics | Action |

|---|---|---|

| Stable and well-bounded | Clear ownership, predictable behavior | Keep |

| Works today but structurally fragile | Retry, state, or boundary issues | Refactor incrementally |

| Fundamentally misaligned | Unclear ownership, hidden coupling, or over-centralized orchestration | Redesign |

Large rewrites are avoided unless the system is actively blocking progress.

Most systems improve significantly through:

- Tightening contracts

- Making state explicit

- Restructuring workflow boundaries

The priority is always:

- Make the system understandable

- Make it resilient under real conditions

Once those are in place, the system becomes much easier to evolve.

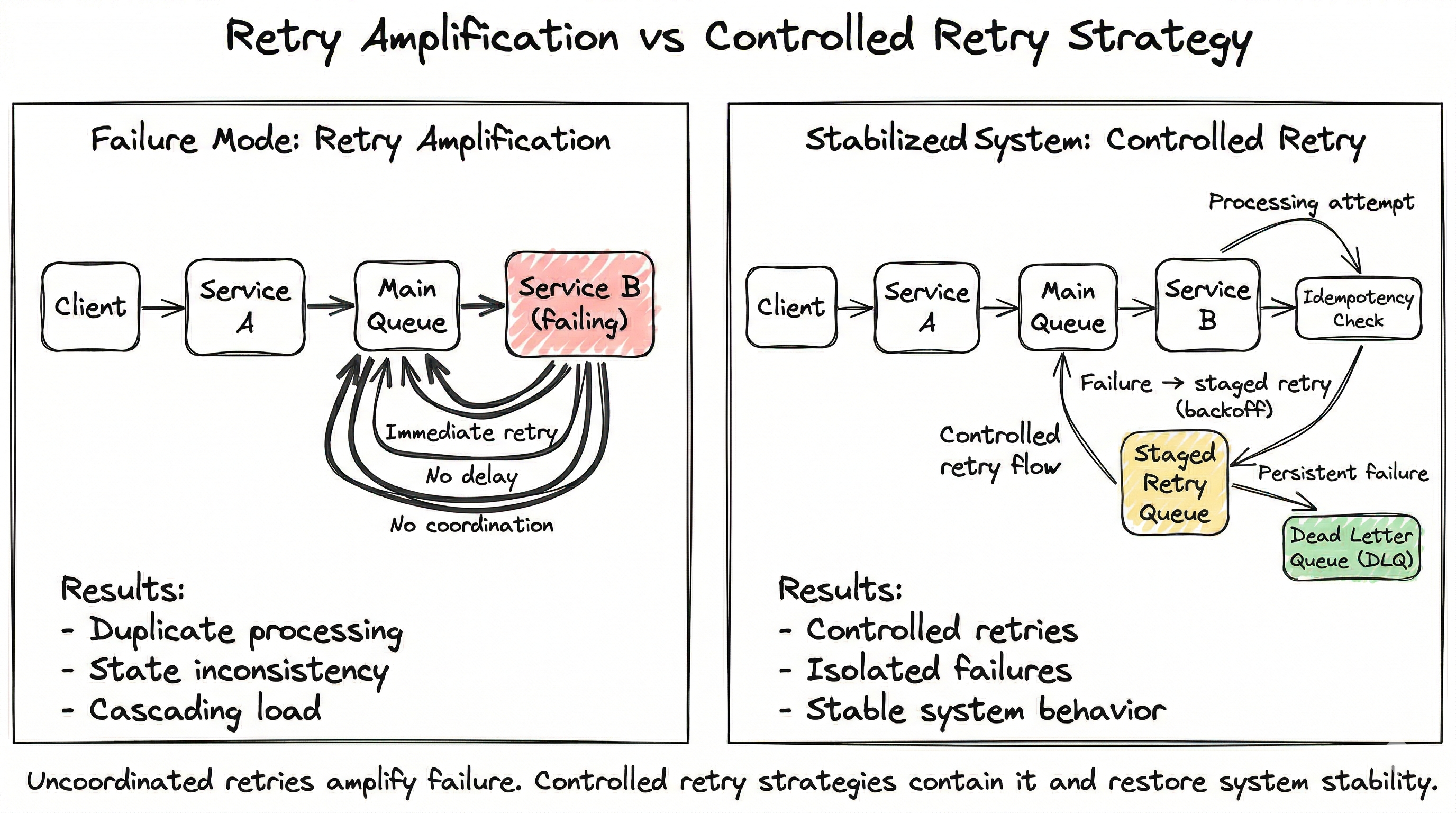

Failure Modes: When Retries Amplify Instability

A common failure pattern in event-driven systems is retry amplification.

In one system, a downstream service began intermittently failing under load.

Upstream services retried aggressively, re-enqueuing the same events multiple times.

Because idempotency was not consistently enforced:

- Duplicate events were processed as new work

- State became inconsistent

- Downstream systems were overloaded

- System latency degraded

The issue was not the initial failure but that the retry strategy amplified it.

The failure mode becomes clear when visualized:

Fundamental Improvements

- Idempotency enforced at all service boundaries

- Staged retry queues introduced with increasing delay intervals

- Dead-letter queues added to isolate persistent failures

- Retry policies tightened with exponential backoff and circuit breakers

- State transitions made explicit so workflows could resume safely

Observability was improved through:

- End-to-end tracing

- Correlation across services

The lesson

Retries are not a reliability mechanism on their own.

Without clear state management and idempotent boundaries, retries amplify failure instead of containing it.

Event-Driven Boundaries: Publisher vs Consumer

Validation, retries, and schema enforcement are shared responsibilities in event-driven systems, but not equally.

Publisher responsibilities

- Enforce schema and contract correctness

- Validate required fields (business logic)

- Prevent malformed events

- Handle short-lived transport retries

Consumer responsibilities

- Enforce semantic validation

- Ensure idempotency

- Control retry behavior

- Manage recovery logic

A message can be structurally valid but still be:

- Duplicated

- Out of order

- No longer relevant

The principle:

Publishers protect the contract. Consumers protect correctness.

Robust systems require both.

Documenting Systems That Weren’t Built by You

In some cases, I’ve designed and built the system from the ground up, as with the event-driven architecture behind Einstein Bots.

But more often, the challenge is stepping into an existing system: one with history, partial understanding, and evolving constraints.

In those situations, documentation is not the starting point. Reconstruction is.

The system is examined from multiple perspectives:

- Code structure

- Service boundaries

- Runtime behavior

- Infrastructure

- Data flow

- End-to-end workflows

The goal is to understand:

- Who owns state

- Where sources of truth live

- Where synchronous vs asynchronous transitions occur

Documentation is built in layers:

System map

High-level view of services, integrations, queues, and boundaries

Workflow traces

End-to-end flows including:

- Happy paths

- Failure paths

- Retries

- Recovery behavior

Contracts

- Event schemas

- Payloads

- Ownership boundaries

- Validation responsibilities

Operational model

- Deployment strategy

- Environment differences

- Observability

- Failure modes

Documentation is validated by walking it with engineers familiar with the system.

Because documentation is only useful if it helps someone reason about the system under pressure.

Selected Work

The patterns described here are drawn from production systems built and operated at scale.

-

Building a Fault-Tolerant Data Pipeline for Chatbots

Event-driven pipeline design for conversational systems, including retry strategies, failure isolation, and large-scale processing.

Salesforce Engineering -

Building a Scalable Event Pipeline with Heroku and Salesforce

Distributed event pipeline architecture, focusing on scalability, reliability, and system boundary design.

Salesforce Engineering

Closing

The goal is not to describe the system, but to make the system and its design legible enough that a team can evolve it safely.

If you’re working on a system like this and want a second set of eyes before scaling, reach out.